Overview

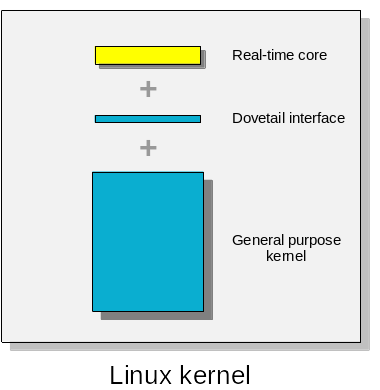

A dual kernel architecture. Like its predecessors in the Xenomai series, Xenomai 4 with EVL brings real-time capabilities to Linux by embedding a companion core into the kernel, which specifically deals with tasks requiring ultra-low and bounded response time to events. For this reason, this approach is known as a dual kernel architecture, delivering stringent real-time guarantees to some tasks alongside rich operating system services to others. In this model, the general purpose kernel and the real-time core operate almost asynchronously, each serving its own set of tasks, with the real-time core always taking precedence over the general purpose kernel.

In order to achieve this, the Xenomai 4 project works on four components:

- a piece of inner kernel code - aka Dovetail - which acts as an interface between the companion core and the general purpose kernel. This layer introduces a high-priority execution stage on which tasks with real-time requirements should run. In other words, with the Dovetail code in, the Linux kernel can plan for running out-of-band tasks in a separate execution context which is not subject to the common forms of serialization the general purpose work has to abide by (e.g. interrupt masking, spinlocks).

- a compact and scalable real-time core, which is intended to serve as a reference implementation for other dual kernel systems based on Dovetail.

- a C library known as libevl, which gives C/C++ applications access to the real-time core services.

- a Rust crate called revl, which gives Rust applications access to the real-time core services as well.

Originally, the Dovetail code base forked off of the I-pipe interface back in 2015, mainly to address fundamental maintenance issues with respect to tracking the most recent kernel releases. In parallel, a simplified variant of the Cobalt core once known as ‘Steely’ was implemented in order to experiment freely with Dovetail as the latter significantly departed from the I-pipe interface. At some point, Steely evolved into an SMP-scalable, easier to grasp and maintain real-time core which now serves as the reference implementation for Dovetail, known as the EVL core. The EVL core is at the heart of Xenomai 4.

When is a dual kernel architecture a good fit?

A dual kernel system should keep the functional overlap between the general purpose kernel and the companion core minimal, handing over only the time-critical workload to a dedicated component which is simple and decoupled enough from the rest of the system for you to trust. Typical applications best served by such infrastructure have to acquire data from external devices with only small jitter, within a few tens of microseconds (absolute worst case) once available, then process such input over POSIX threads which can meet real-time requirements by running on the high-priority stage, offloading any non-(time-)critical work to common threads. Generally speaking, an approach like the one the EVL core implements may be a good fit for the job in the following cases:

- if your application needs ultra-low response times and/or strictly limited jitter in a reliable fashion. Reliable as in « not impacted by any valid kernel or user code the general purpose kernel might run in parallel in a way which could prevent stringent real-time deadlines from being met ». Valid code in this case means code which does not cause machine crashes; the companion core of a dual kernel system is not sensitive to slowdowns which might be induced by poorly written general purpose drivers. For instance, a low priority workload can put a strain on the CPU cache subsystem, causing delays for the real-time activities when they resume for handling some external event:

  - if this workload manipulates a large data set continuously, causing frequent cache evictions. As the outer cache in the hierarchy is shared between CPUs, a ripple effect does exist on all of them, including the isolated ones.

  - if this workload involves many concurrent threads causing a high rate of context switches, which may get even worse if those threads belong to different processes (i.e. having distinct address spaces).

  The small footprint of the dedicated core helps in this case, since less code and data are involved in managing the real-time system as a whole, lowering the impact of unfavorable cache conditions. In addition, the small core does not have to abide by the locking rules imposed on the general purpose kernel code when scheduling its threads. Instead, it may preempt the general purpose kernel at any time, based on the interrupt pipelining technique Dovetail implements. This piece of information describes typical runtime situations when the general purpose workload is putting pressure on the overall system, regardless of the relative priority of its tasks.

- if your application system design requires the real-time execution path to be logically isolated from the general purpose activities by construction, so as not to share critical subsystems like the common scheduler.

- if resorting to CPU isolation in order to mitigate the adverse effect the non real-time workload might have on the real-time side is not an option, or once tested, is not good enough for your use case. Obviously, using such a trick with low-end hardware on a single-core CPU would not fly since at least one non-isolated CPU must be available to the kernel for carrying out the system housekeeping duties.
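To make the jitter criterion discussed in this section more concrete, here is a minimal sketch in plain C (not libevl code) of how the worst-case lateness of a periodic activity could be computed from recorded wake-up timestamps. The names `jitter_stats`, `measure_jitter` and `meets_budget`, and the idea of checking the maximum against a fixed budget, are assumptions made for illustration only; they are not part of EVL.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Lateness figures, in nanoseconds (hypothetical type for this sketch). */
struct jitter_stats {
	int64_t min_ns;
	int64_t max_ns;	/* the absolute worst case */
	int64_t avg_ns;
};

/*
 * wakeup_ns[i] is the time at which the i-th periodic activation
 * actually ran; its ideal release time is start_ns + i * period_ns.
 */
static struct jitter_stats measure_jitter(const int64_t *wakeup_ns,
					  size_t count,
					  int64_t start_ns,
					  int64_t period_ns)
{
	struct jitter_stats s = { 0, 0, 0 };
	int64_t sum = 0;

	assert(count > 0);

	for (size_t i = 0; i < count; i++) {
		int64_t ideal = start_ns + (int64_t)i * period_ns;
		int64_t late = wakeup_ns[i] - ideal;

		if (i == 0 || late < s.min_ns)
			s.min_ns = late;
		if (i == 0 || late > s.max_ns)
			s.max_ns = late;
		sum += late;
	}

	s.avg_ns = sum / (int64_t)count;

	return s;
}

/* A real-time requirement is about the worst case, not the average. */
static int meets_budget(const struct jitter_stats *s, int64_t budget_ns)
{
	return s->max_ns <= budget_ns;
}
```

With four activations recorded 3, 12, 7 and 30 ns late, the worst case is 30 ns and the average is 13 ns. Only the former tells you whether a deadline could be missed.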

What does a dual kernel architecture require from your application?

In order to meet the deadlines, a dual kernel architecture requires your application to exclusively use the dedicated system call interface the real-time core implements. With the EVL core, this API is libevl, or any other high-level API based on it which abides by this rule. Any regular Linux system call issued while running on the high-priority stage would automatically demote the caller to the low-priority stage, dropping real-time guarantees in the process.

This rule has consequences when using C++ for instance, since you then need to define which classes and runtime features the time-critical code may use.

This said, your application may use any service from your favorite standard C/C++ library outside of the time-critical context.

Generally speaking, a clear separation of the real-time workload from the rest of the implementation in your application is key. Having such a split in place should be a rule of thumb regardless of the type of real-time approach, including with native preemption; it is crucial with a dual kernel architecture. Besides, this calls for a clear definition of the (few) interface(s) which may exist between the real-time and general purpose tasks, which is definitely the right thing to do.
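As an illustration of such a narrow interface, the following is a minimal sketch of a single-producer, single-consumer ring in portable C11. The time-critical side pushes samples without blocking or issuing any system call, and a common thread drains them at its own pace. This is generic code, not libevl code: `sample_ring`, `ring_push`, `ring_pop` and the `RING_SLOTS` capacity are hypothetical names chosen for this example.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

#define RING_SLOTS 64u	/* hypothetical capacity */

static_assert((RING_SLOTS & (RING_SLOTS - 1)) == 0,
	      "RING_SLOTS must be a power of two");

struct sample_ring {
	_Atomic uint32_t head;	/* next slot to write, advanced by the producer */
	_Atomic uint32_t tail;	/* next slot to read, advanced by the consumer */
	int32_t slots[RING_SLOTS];
};

static void ring_init(struct sample_ring *r)
{
	atomic_init(&r->head, 0);
	atomic_init(&r->tail, 0);
}

/* Time-critical side: never blocks, never calls into the kernel. */
static bool ring_push(struct sample_ring *r, int32_t value)
{
	uint32_t head = atomic_load_explicit(&r->head, memory_order_relaxed);
	uint32_t tail = atomic_load_explicit(&r->tail, memory_order_acquire);

	if (head - tail == RING_SLOTS)
		return false;	/* full: the caller decides what to drop */

	r->slots[head & (RING_SLOTS - 1)] = value;
	/* Publish the slot content before making it visible. */
	atomic_store_explicit(&r->head, head + 1, memory_order_release);

	return true;
}

/* General purpose side: may log, write to disk, etc. after popping. */
static bool ring_pop(struct sample_ring *r, int32_t *value)
{
	uint32_t tail = atomic_load_explicit(&r->tail, memory_order_relaxed);
	uint32_t head = atomic_load_explicit(&r->head, memory_order_acquire);

	if (head == tail)
		return false;	/* empty */

	*value = r->slots[tail & (RING_SLOTS - 1)];
	/* Release the slot back to the producer. */
	atomic_store_explicit(&r->tail, tail + 1, memory_order_release);

	return true;
}
```

The design choice here is that each index has exactly one writer, so no lock is shared between the two sides. The ring does not wake up the consumer by itself; how the general purpose thread gets notified is left out of this sketch.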

The basic execution unit the EVL core recognizes for delivering its services in user space is the thread, which commonly translates as a POSIX thread.

Which APIs are available for implementing applications?

A C language API, also known as libevl, is available for programming real-time applications which need to call the EVL core services.

A Rust crate is available for the same purpose.

Which API is available for implementing real-time device drivers?

The EVL core exports a kernel API for writing drivers, extending the Linux device driver interface so that applications can benefit from out-of-band services delivered from the high-priority execution stage.

The basic execution unit the EVL core recognizes for delivering its services in kernel space is the kthread, which is a common Linux kthread on EVL steroids.

Porting Dovetail

If you intend to port Dovetail to:

- some arbitrary kernel flavor or release.

- an unsupported hardware platform.

- another CPU architecture.

Then you could make good use of the following information:

- first of all, the EVL development process is described in this document. You will need this information in order to track the EVL development your own work is based on.

- detailed information about porting the Dovetail interface to another CPU architecture for the Linux kernel is given here.

- the current collection of « rules of thumb » when it comes to developing software on top of EVL’s dual kernel infrastructure.

Implementing your own companion core

If you plan to develop your own core to embed into the Linux kernel for running POSIX threads on the high-priority stage Dovetail introduces, you can use the EVL core implementation as reference code with respect to interfacing your work with the general purpose kernel. To help you further in this task, you can refer to the following sections of this site:

- all documentation sections mentioned earlier about porting Dovetail.

- a description of the so-called alternate scheduling scheme, by which Linux kernel threads and POSIX threads may gain access to the high-priority execution stage in order to benefit from the real-time scheduling guarantees.

- a developing series of technical documents which walks you through the EVL core implementation.

Running the EVL core

Recipe for the impatient

1. read this document about building the EVL core and libevl.

2. boot your EVL-enabled kernel.

3. write your first application code using the libevl API. You may find the following bits useful, particularly when discovering the system:

   - what initializing an EVL application entails.

   - how to have POSIX threads run on the high-priority stage.

   - which calling context is proper for each EVL service from this API.

The process for getting the EVL core running on your target system can be summarized as follows (click on the steps to open the related documentation):

graph LR;

S("Build libevl") --> X["Install libevl"]

style S fill:#99ccff;

click S "/core/build-steps#building-libevl"

X --> A["Build kernel"]

click A "/core/build-steps#building-evl-core"

A --> B["Install kernel"]

style A fill:#99ccff;

B --> C["Boot target"]

style C fill:#ffffcc;

C --> U["Run evl check"]

style U fill:#ffffcc;

click U "/core/commands#evl-check-command"

U --> UU{OK?}

style UU fill:#fff;

UU -->|Yes| D["Run unit tests"]

UU -->|No| R[Fix Kconfig]

click R "/core/caveat"

style R fill:#99ccff;

R --> A

style D fill:#ffffcc;

click D "/core/testing#evl-unit-testing"

D --> L{OK?}

style L fill:#fff;

L -->|Yes| E["Test with 'hectic'"]

L -->|No| Z["Report upstream"]

style Z fill:#ff420e;

click E "/core/testing#hectic-program"

click Z "https://subspace.kernel.org/lists.linux.dev.html"

E --> M{OK?}

style M fill:#fff;

M -->|Yes| F["Calibrate timer"]

M -->|No| Z

click F "/core/runtime-settings#calibrate-core-timer"

style E fill:#ffffcc;

F --> G["Test with 'latmus'"]

style F fill:#ffffcc;

style G fill:#ffffcc;

click G "/core/testing#latmus-program"

G --> N{OK?}

style N fill:#fff;

N -->|Yes| O["Go celebrate"]

N -->|No| Z

style O fill:#33cc66;

Once the EVL core runs on your target system, you can go directly to step #3 of the quick recipe above.
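For readers who cannot render the chart, the decision flow it describes can be condensed into a small transition function. The sketch below is only a restatement of the chart in C; the `step` identifiers, `step_name` and `next_step` are names made up for this example, and the `ok` argument is ignored for steps the chart shows without an "OK?" decision.

```c
#include <assert.h>
#include <stdbool.h>

/* One entry per box of the chart. */
enum step {
	BUILD_LIBEVL, INSTALL_LIBEVL, BUILD_KERNEL, INSTALL_KERNEL,
	BOOT_TARGET, RUN_EVL_CHECK, FIX_KCONFIG, RUN_UNIT_TESTS,
	TEST_HECTIC, CALIBRATE_TIMER, TEST_LATMUS,
	REPORT_UPSTREAM, CELEBRATE,
	STEP_COUNT
};

/* Labels as they appear in the chart. */
static const char *step_name(enum step s)
{
	static const char *const names[STEP_COUNT] = {
		"Build libevl", "Install libevl", "Build kernel",
		"Install kernel", "Boot target", "Run evl check",
		"Fix Kconfig", "Run unit tests", "Test with 'hectic'",
		"Calibrate timer", "Test with 'latmus'",
		"Report upstream", "Go celebrate",
	};

	assert((unsigned int)s < STEP_COUNT);

	return names[s];
}

/* Follow one arrow of the chart, given the outcome of the current step. */
static enum step next_step(enum step cur, bool ok)
{
	switch (cur) {
	case BUILD_LIBEVL:	return INSTALL_LIBEVL;
	case INSTALL_LIBEVL:	return BUILD_KERNEL;
	case BUILD_KERNEL:	return INSTALL_KERNEL;
	case INSTALL_KERNEL:	return BOOT_TARGET;
	case BOOT_TARGET:	return RUN_EVL_CHECK;
	case RUN_EVL_CHECK:	return ok ? RUN_UNIT_TESTS : FIX_KCONFIG;
	case FIX_KCONFIG:	return BUILD_KERNEL;	/* rebuild after fixing */
	case RUN_UNIT_TESTS:	return ok ? TEST_HECTIC : REPORT_UPSTREAM;
	case TEST_HECTIC:	return ok ? CALIBRATE_TIMER : REPORT_UPSTREAM;
	case CALIBRATE_TIMER:	return TEST_LATMUS;
	case TEST_LATMUS:	return ok ? CELEBRATE : REPORT_UPSTREAM;
	default:		return cur;	/* terminal boxes stay put */
	}
}
```

Starting from `BUILD_LIBEVL` and feeding `true` at every step reaches `CELEBRATE` after ten transitions. A failed `evl check` is the only failure which loops back into the flow, through the Kconfig fix and a kernel rebuild.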